10X Faster Web Data Extraction using AI Website Scraper

Estimated Reading Time 9 min

AIMLEAP Automation Works Startups | Digital | Innovation| Transformation

Table of Contents

1. Introduction

2. Understanding AI-Web Scraping

- A. What is AI-Driven Web Scraping?

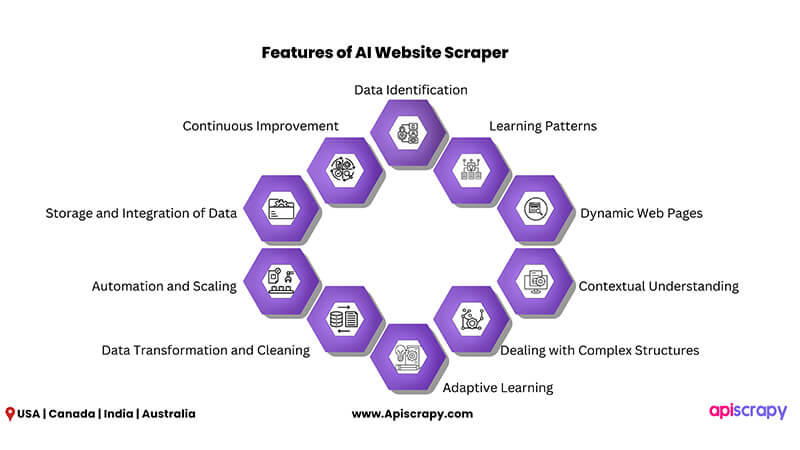

- B. Features of an AI Website Scraper

- C. Challenges of Traditional Web Scraping Methods

- D. Factors to Consider When Selecting an AI Website Scraper

3. Case Studies Showcasing the Power of AI Website Scraper by APISCRAPY

4. Future Trends in AI-Web Scraping

5. Understanding AI Models and Their Applications in Data Collection

- A. What is an AI Model?

- B. The Significance of AI Model Training in Businesses

- C. Common AI Models and Their Data Extraction Applications

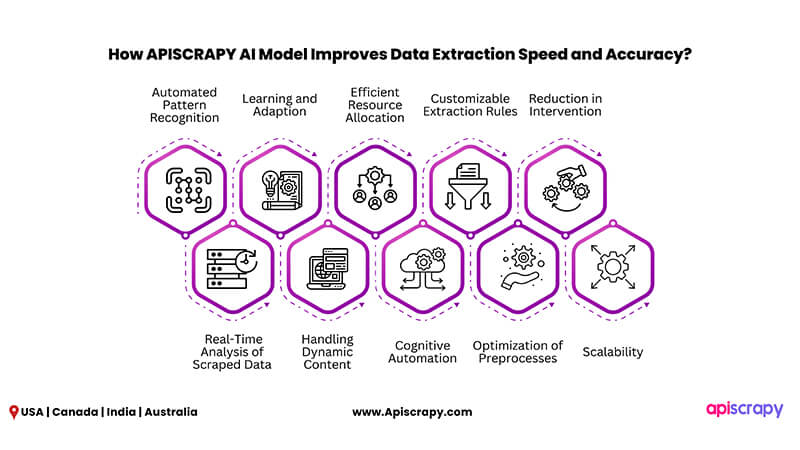

- D. How the APISCRAPY AI Model Improves Data Extraction Speed and Accuracy

6. AI Training Data Sets: The Foundation of Machine Learning

7. Web Data Extraction: Leave Everything to AI or Combine It with Human Interaction

The internet has become a treasure trove of information, and extracting valuable data from websites is now critical for organizations across industries. To compete, businesses need that data quickly and efficiently, which is why many are moving toward automated data extraction with AI website scraper tools. Let’s look at the significance, processes, and uses of AI web data extraction today.

In automated web scraping, Robotic Process Automation (RPA) bots perform repetitive tasks by mimicking human actions in the graphical user interface (GUI). These tasks include searching for URLs and extracting and saving high-quality data.

Understanding AI-Web Scraping

A. What is AI-Driven Web Scraping?

AI-driven web scraping automates the collection of data from websites and web pages using artificial intelligence (AI) and machine learning. It goes beyond traditional scraping methods by adding AI algorithms that enable more advanced and efficient data extraction, even from complex and frequently changing websites.

B. Features of an AI Website Scraper

1. Data Identification

First, the AI website scraper identifies which data elements to collect from a target website. These may include text, images, tables, links, and other items.
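To make the identification step concrete, here is a minimal sketch using Python’s standard-library `html.parser`. It assumes the page HTML is already downloaded and uses fixed rules rather than a trained model; the names `ElementCollector` and `identify_elements` are hypothetical.

```python
from html.parser import HTMLParser


class ElementCollector(HTMLParser):
    """Collects visible text, link targets, and image sources from an HTML page."""

    def __init__(self):
        super().__init__()
        self.links = []        # href values of <a> tags
        self.images = []       # src values of <img> tags
        self.text = []         # non-empty text chunks outside <script>/<style>
        self._skip_depth = 0   # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])
        elif tag == "img" and attrs.get("src"):
            self.images.append(attrs["src"])

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.text.append(data.strip())


def identify_elements(html):
    """Return the text, links, and images found in one page."""
    parser = ElementCollector()
    parser.feed(html)
    parser.close()
    return {"text": parser.text, "links": parser.links, "images": parser.images}
```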

2. Learning Patterns

To discover patterns and structures that commonly appear in web pages, AI algorithms are trained on a varied selection of websites. They learn to recognize HTML tags, CSS selectors, and other properties that indicate where the needed data is located.
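A very rough, non-learned stand-in for pattern discovery is to count how often each tag-and-class combination repeats, since repeating combinations often mark record containers such as product cards. This is a frequency heuristic under that assumption, not how any particular AI scraper is trained, and `repeated_signatures` is an illustrative name.

```python
from collections import Counter
from html.parser import HTMLParser


class SignatureCounter(HTMLParser):
    """Counts how often each (tag, class attribute) pair appears in a page."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if cls:
            self.counts[(tag, cls)] += 1


def repeated_signatures(html, min_count=3):
    """Return (tag, class) pairs seen at least min_count times, most frequent first."""
    counter = SignatureCounter()
    counter.feed(html)
    counter.close()
    return [sig for sig, n in counter.counts.most_common() if n >= min_count]
```

On a listing page, the top signatures are candidates for the repeating record container and its fields.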

3. Dynamic Web Pages

Traditional website scrapers often fail on websites that load content dynamically with JavaScript. An AI website scraper, by contrast, can interact with the website and retrieve data from dynamically generated sections using techniques such as headless browsers or browser automation.

4. Contextual Understanding

AI website scrapers can do more than extract raw data. They can recognize product names, prices, and descriptions, and distinguish headlines from body content in news articles.

5. Dealing with Complex Structures

Many websites have complex layouts and nested structures. An AI website scraper can navigate these hierarchies and identify the correct elements to extract, even when they are deeply nested in the page.

6. Adaptive Learning

An AI website scraper is built to adapt to changes in website layout. When a site is updated or redesigned, its AI algorithms can relearn the new patterns so that data extraction remains accurate.
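The sketch below shows the problem adaptive scrapers try to solve. It is a hand-written fallback chain, not a learning system: when the primary pattern stops matching after a redesign, the next one is tried. The class names `price-now` and `price` are hypothetical markup, not taken from any real site.

```python
import re


def _first(pattern, text):
    """Return the first capture group of pattern in text, or None."""
    m = re.search(pattern, text)
    return m.group(1).strip() if m else None


# Hypothetical price extractors, ordered from most to least specific.
PRICE_EXTRACTORS = [
    lambda html: _first(r'<span class="price-now">([^<]+)</span>', html),  # current layout
    lambda html: _first(r'<div class="price">([^<]+)</div>', html),        # older layout
    lambda html: _first(r'(\$\s?\d[\d,]*(?:\.\d{2})?)', html),             # generic fallback
]


def extract_with_fallback(html, extractors=PRICE_EXTRACTORS):
    """Try each extractor in turn; return (value, index of the extractor that matched)."""
    for i, extract in enumerate(extractors):
        value = extract(html)
        if value:
            return value, i
    return None, None
```

Logging which extractor index matched is a cheap way to notice that a site has changed before the last fallback also fails.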

7. Data Transformation and Cleaning

Extracted data must be transformed before it is useful. An AI website scraper can perform data cleaning, normalization, and transformation so that the output is consistent and well organized.
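A minimal cleaning and normalization sketch, assuming records arrive as dictionaries with hypothetical `name` and `price` fields. It collapses whitespace, parses price strings into `Decimal`, and drops duplicates.

```python
import re
from decimal import Decimal


def clean_price(raw):
    """Turn strings like ' $1,299.00 ' into Decimal('1299.00'); return None if no number."""
    match = re.search(r"\d[\d,]*(?:\.\d+)?", raw or "")
    return Decimal(match.group(0).replace(",", "")) if match else None


def clean_records(records):
    """Normalize whitespace in names, parse prices, and drop duplicate products."""
    seen = set()
    cleaned = []
    for rec in records:
        name = " ".join((rec.get("name") or "").split())  # collapse runs of whitespace
        price = clean_price(rec.get("price"))
        key = (name.lower(), price)
        if not name or key in seen:
            continue  # skip empty names and duplicates
        seen.add(key)
        cleaned.append({"name": name, "price": price})
    return cleaned
```

`Decimal` is used instead of `float` so that prices such as 0.10 are stored exactly.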

8. Automation and Scaling

An AI website scraper can automate the process of visiting multiple pages on a website, extracting data, and moving on to the next page. This makes it efficient to scrape large volumes of data.
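A sketch of the page-to-page loop described above. The caller supplies a `fetch(url)` function returning HTML (an assumption that keeps the example runnable without network access), and the `item` class and `rel="next"` link markup are hypothetical.

```python
import re
from urllib.parse import urljoin


def crawl_paginated(start_url, fetch, max_pages=50):
    """Follow 'next page' links from start_url and collect item titles."""
    items, url, visited = [], start_url, set()
    while url and url not in visited and len(visited) < max_pages:
        visited.add(url)
        html = fetch(url) or ""
        # Hypothetical markup: each item is <h2 class="item">...</h2>
        items.extend(re.findall(r'<h2 class="item">([^<]+)</h2>', html))
        # Hypothetical markup: the next page is <a rel="next" href="...">
        nxt = re.search(r'<a rel="next" href="([^"]+)"', html)
        url = urljoin(url, nxt.group(1)) if nxt else None  # resolve relative links
    return items
```

The `visited` set and `max_pages` cap keep the loop from running forever when a site links back to an earlier page.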

9. Storage and Integration of Data

After extraction, data can be saved in databases, spreadsheets, or other systems for later analysis. An AI website scraper can connect directly to data storage and processing systems so the data can be used seamlessly.
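One way to hand scraped records to a storage system is Python’s built-in `sqlite3` module. The table layout and the choice to upsert by URL are assumptions for illustration.

```python
import sqlite3


def save_records(conn, records):
    """Create the products table if needed and upsert scraped rows keyed by URL."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS products (
               url   TEXT PRIMARY KEY,
               name  TEXT NOT NULL,
               price REAL
           )"""
    )
    # Named placeholders let each record be a plain dict.
    conn.executemany(
        "INSERT OR REPLACE INTO products (url, name, price) VALUES (:url, :name, :price)",
        records,
    )
    conn.commit()
```

Keying on URL means re-scraping a page updates its row instead of adding a duplicate.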

10. Continuous Improvement

AI models in web scraping may continuously learn from new data and adapt to changes, boosting data extraction accuracy and efficiency over time.

C. Challenges of Traditional Web Scraping Methods

Traditional web scraping methods frequently have drawbacks that limit efficiency, accuracy, and scalability. Here are some of the major issues with standard web scraping:

1. Changes to the Website Structure

Websites routinely undergo redesigns or structural changes. These changes break traditional scrapers, which then need constant adjustments to keep working.

2. Dynamic Content

Many modern websites load content dynamically via JavaScript, which traditional scrapers may struggle with. This can result in incomplete or incorrect data extraction.

3. IP Blocking and CAPTCHAs

To deter scraping, websites use safeguards such as IP blocking and CAPTCHAs, making it harder for traditional scrapers to access and extract data.
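Responsible scrapers avoid overloading servers, which also makes blocks less likely. A minimal per-host throttle, assuming a fixed minimum interval between requests, might look like this. It is a politeness measure, not a way to get around IP blocks or CAPTCHAs; the `Throttle` class is a hypothetical helper.

```python
import time


class Throttle:
    """Enforce a minimum delay between consecutive requests to the same host."""

    def __init__(self, min_interval=1.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self.clock = clock      # injectable for testing
        self.sleep = sleep      # injectable for testing
        self._last = {}         # host -> time of the last request

    def wait(self, host):
        """Block until at least min_interval seconds have passed since the last request to host."""
        now = self.clock()
        last = self._last.get(host)
        if last is not None:
            remaining = self.min_interval - (now - last)
            if remaining > 0:
                self.sleep(remaining)
                now = self.clock()
        self._last[host] = now
```

Call `wait(host)` immediately before each request; different hosts do not delay each other.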

4. Data Volume and Rate

Traditional scrapers may not handle large volumes of data efficiently, and they often extract data more slowly than newer systems.

5. Maintenance Overhead

Traditional scrapers need regular maintenance and updates to keep running, which diverts resources from core business tasks.

6. Restricted Customization

Traditional scrapers may be unable to extract certain data points or formats that enterprises require, which limits what data can be collected.

7. Data Accuracy

Inaccuracies and inconsistencies can arise from coding errors, misinterpreted website changes, or limited support for different data types.

8. Legal and Ethical Issues

Traditional scraping methods may unknowingly breach terms of service or intellectual property laws, potentially leading to legal consequences.
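One concrete step toward respecting site rules is checking `robots.txt` before fetching a URL. The sketch uses the standard-library `urllib.robotparser.RobotFileParser` and assumes the file has already been downloaded as text. Note that `robots.txt` does not replace reviewing a site’s terms of service.

```python
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt, user_agent, url):
    """Check a URL against robots.txt rules supplied as text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```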

9. Scalability Concerns

Traditional scrapers may struggle to scale efficiently as data sources and requirements grow, resulting in performance bottlenecks.
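A common way to scale fetching is to run requests concurrently. This sketch uses `concurrent.futures.ThreadPoolExecutor` with a caller-supplied `fetch` function (an assumption); in practice it should be combined with per-host rate limiting.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_all(urls, fetch, max_workers=8):
    """Fetch many URLs concurrently; returns {url: html or the exception raised}."""
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(fetch, url): url for url in urls}
        for future, url in futures.items():
            try:
                results[url] = future.result()
            except Exception as exc:  # one failing page should not stop the batch
                results[url] = exc
    return results
```

Threads suit this workload because scraping time is dominated by waiting on the network.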

10. High Resource Requirement

Manually coding and managing traditional scrapers is time-consuming and labor-intensive, requiring dedicated time and expertise. You can ease these resource demands by considering alternatives, such as using a free web crawler in place of an in-house development team.

11. Inadequate Real-Time Updates

Traditional approaches may not deliver real-time or near-real-time data updates, which can delay insights and decision-making.

12. User Interaction Handling

Websites that need user interactions, such as login credentials or form submissions, can be difficult for typical scrapers to handle.

In response to these problems, organizations are increasingly turning to AI website scraper tools that offer automation, adaptability, and improved accuracy. These solutions overcome the limitations of older methods, allowing businesses to collect and use web data more accurately and efficiently.

D. Factors to Consider When Selecting an AI Website Scraper

Selecting the right AI website scraper is critical for fast and reliable data extraction. To make an informed decision, consider the following factors:

1. Data Complexity and Source Variety

Consider the types of data for extraction as well as the variety of sources (websites, APIs, documents). Check if the scraping tool can handle a variety of data formats and structures.

2. Usability and User Interface

A user-friendly UI makes it easier to adopt an AI website scraper. Look for tools with simple navigation and configuration that require little technical knowledge.

3. AI and Machine Learning Capabilities

Examine the AI and machine learning capabilities of the web scraper. Is it responsive to website changes? Is it capable of handling unstructured data such as text or images?

4. Speed of Data Extraction

Speed is critical in data extraction. Choose a tool that extracts data quickly, especially if you need real-time or large-scale data.

5. Dynamic Content Management

Because many modern websites use JavaScript to load dynamic content, make sure the tool can extract data from such pages.

6. Validation of Data and Quality Control

To ensure accurate and trustworthy data extraction, look for an AI website scraper with built-in data validation and quality control procedures.
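Built-in validation usually means checking each record against an expected schema before it is accepted. A minimal sketch, assuming a hypothetical product schema of field types and required flags:

```python
def validate_record(record, schema):
    """Return a list of problems; an empty list means the record passed.

    schema maps field name -> (expected type, required flag).
    """
    problems = []
    for field, (expected_type, required) in schema.items():
        value = record.get(field)
        if value is None:
            if required:
                problems.append(f"missing required field '{field}'")
        elif not isinstance(value, expected_type):
            problems.append(
                f"'{field}' should be {expected_type.__name__}, got {type(value).__name__}"
            )
    return problems


# Hypothetical schema for a product record
PRODUCT_SCHEMA = {"name": (str, True), "price": (float, True), "rating": (float, False)}
```

Returning a list of problems, rather than raising on the first one, makes it easy to report every issue in a rejected record.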

7. Customization Possibilities

Can you tailor an AI website scraper to your specific data extraction needs? Configuration flexibility is critical for customized extraction.

8. Scalability and Performance

Check whether the AI website scraper can handle your data volume and scale as your requirements grow while maintaining consistent performance.

9. Security and Compliance

Consider the tool’s data security safeguards and compliance with legal and ethical norms, particularly when dealing with sensitive data.

Our blog post on “Scrape the Data Responsibly: Principles to Practice” offers valuable insights into this aspect.

10. Technical Assistance and Updates

Reliable technical support and regular updates are essential for diagnosing problems and keeping the tool up to date.

11. Interoperability with Other Tools

Check whether an AI web scraper integrates with your existing data analysis, visualization, or database tools to ensure smooth workflow integration.
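Interoperability often comes down to exporting data in formats other tools can read. A sketch using Python’s standard `csv` and `json` modules; the field names are hypothetical.

```python
import csv
import io
import json


def to_csv(records, fieldnames):
    """Serialize dict records to CSV text, ignoring fields not listed in fieldnames."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=fieldnames, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(records)
    return buf.getvalue()


def to_json_lines(records):
    """Serialize records as JSON Lines (one JSON object per line)."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)
```

CSV suits spreadsheets and BI tools, while JSON Lines preserves nested fields and streams well into data pipelines.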

12. Learning Curve

Assess how quickly your staff can learn to use the product efficiently, since a steep learning curve can hamper adoption.

13. Price and Cost Structure

Determine whether pricing is subscription-based or usage-based and whether a free trial is offered. Consider whether it fits within your budget.

14. Reputation and Reviews of Vendors

Check the tool provider’s reputation, read user reviews, and ask for recommendations to find a dependable AI website scraper.

15. Trial Period

Choose tools with a trial period whenever available. This allows you to evaluate the tool’s suitability for your needs before making a purchase.

16. Future-Proofing

Take into account the tool’s potential to adapt to future technological advances, ensuring that it remains relevant as AI and web technologies improve.

For insights into how APISCRAPY’s site scraper technology is future-ready and adaptable to evolving web landscapes, see our article on Future-Ready Site Scraping Transformation.

By carefully weighing these factors, you can choose an AI web scraper that meets your data extraction needs, improves efficiency, and produces accurate and relevant insights for your organization.

Case Studies Showcasing the Power of AI Website Scraper by APISCRAPY

Incorporating powerful AI algorithms into web scraping has produced significant results across multiple industries. These APISCRAPY case studies show how companies have used advanced AI website scrapers to drive innovation, gain insights, and make informed decisions.

1. Pricing Optimization for E-Commerce

An e-commerce company used an AI website scraper to collect competitor pricing data from several websites. It analyzed the data with machine learning techniques to adjust its own prices dynamically in real time. This optimization improved competitiveness, profit margins, and customer retention.
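The case study does not describe the pricing model, so the following is only an illustrative rule, not the company’s method: price just below the cheapest scraped competitor price, but never below cost plus a minimum margin. The function `reprice` and its parameter values are assumptions.

```python
def reprice(our_cost, competitor_prices, min_margin=0.10, undercut=0.01):
    """Hypothetical rule: undercut the cheapest competitor, but keep at least min_margin over cost."""
    floor = round(our_cost * (1 + min_margin), 2)
    if not competitor_prices:
        return floor  # no competitor data: fall back to the margin floor
    target = round(min(competitor_prices) - undercut, 2)
    return max(target, floor)
```

For example, with a cost of 80.00 and scraped competitor prices of 99.99 and 104.50, the rule returns 99.98; with a cost of 95.00 the margin floor of 104.50 wins instead.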

2. Financial Market Intelligence

A financial institution used a powerful AI website scraper to gather news stories, social media mentions, and financial reports about specific stocks. It applied natural language processing, sentiment analysis, and predictive modeling to anticipate stock price movements and gauge market sentiment. These insights supported investment decisions and risk mitigation.
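Production sentiment analysis relies on trained NLP models, but a tiny lexicon-based scorer shows the basic idea of turning scraped headlines into a sentiment signal. The word lists are hypothetical and far too small for real use.

```python
import re

# Tiny illustrative lexicon; real systems use trained models or curated lexicons.
POSITIVE = {"beat", "beats", "growth", "strong", "upgrade", "record", "gain", "gains"}
NEGATIVE = {"miss", "misses", "loss", "weak", "downgrade", "lawsuit", "drop", "drops"}


def sentiment_score(text):
    """Return (positive - negative) / matched words, in [-1, 1]; 0.0 if nothing matched."""
    words = re.findall(r"[a-z']+", text.lower())
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    return 0.0 if pos + neg == 0 else (pos - neg) / (pos + neg)


def average_sentiment(headlines):
    """Mean score across a batch of scraped headlines."""
    scores = [sentiment_score(h) for h in headlines]
    return sum(scores) / len(scores) if scores else 0.0
```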

3. Drug Monitoring in Healthcare

A pharmaceutical company used advanced AI algorithms to collect and analyze data from medical forums, patient reviews, and clinical trial reports. Natural language processing algorithms detected reports of adverse drug reactions, allowing the company to address safety issues, improve drug development, and protect patient safety.

4. Analysis of the Real Estate Market

A real estate agency used APISCRAPY’s AI website scraper to collect property listings, historical sales data, and demographic information from real estate websites. It forecast property values with machine learning algorithms based on features such as location, amenities, and market trends, enabling buyers, sellers, and investors to make informed decisions.

5. Aggregation of News Content

A media company used APISCRAPY’s AI website scraper to collect relevant news articles, blog posts, and social media content. It generated customized news feeds for its audience by categorizing and summarizing the content, and this personalized approach improved user retention and engagement.

Future Trends in AI-Web Scraping

AI-web scraping is evolving quickly, driven by technological advances and growing demand for meaningful insights from web data. Here are some emerging technologies and ideas shaping the AI-web scraping landscape:

1. Edge AI for On-Device Scraping

Edge AI runs AI models on local devices rather than in the cloud, and this approach is now being extended to web scraping. It enables devices to collect and analyze data on their own without constant dependence on cloud services.

2. Improved Natural Language Processing (NLP)

NLP is advancing in techniques such as sentiment analysis, entity recognition, and language translation. As a result, AI website scrapers are getting better at extracting relevant insights from text, which makes them useful for sentiment analysis and content summarization.

3. Visual Web Scraping

Visual scraping is the process of extracting data from photos and videos on web pages using computer vision. This concept is becoming popular for applications such as extracting product information from photographs, identifying items, and even interpreting text inside images.

4. Legal AI-Web Scraping

As privacy concerns grow, there is greater emphasis on ethical data scraping. AI-powered solutions are being developed to ensure that scraping respects privacy, follows terms of service, and avoids capturing sensitive or personal information.
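One simple privacy safeguard is redacting obvious personal data before storing scraped text. The regular expressions below are illustrative assumptions: they catch common email and US-style phone formats, not all personal information.

```python
import re

# Illustrative patterns only; they do not cover every form of personal data.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"(?:\+1[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}")


def redact_pii(text):
    """Replace email addresses and phone numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)
```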

5. Aggregation and Meta-Search

To aggregate data from different sources, meta-search engines and aggregation tools are emerging. These apps use AI techniques to curate and deliver comprehensive information to users in a single interface.

6. Data Integrity and Blockchain

Blockchain technology is being investigated as a way to improve data integrity and security in web scraping. It can provide an immutable record of data sources and help verify the accuracy of the information collected.
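Full blockchains add distributed consensus, but the property this paragraph relies on, a tamper-evident record, can be illustrated with a plain SHA-256 hash chain. This sketch is a simplification, not a blockchain; the functions `add_entry` and `verify` and the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, record):
    payload = json.dumps(record, sort_keys=True)  # stable serialization
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def add_entry(chain, record):
    """Append a record whose hash also covers the previous entry's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})
    return chain


def verify(chain):
    """Recompute every hash; an edited record or broken link returns False."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash covers its predecessor, changing any earlier record invalidates every later link.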

7. Data Labeling Automation

AI-powered data labeling tools simplify the annotation of scraped data, making it easier to produce labeled data sets for machine learning models.

8. Low-Code AI-Web Scraping Platforms

Users with modest programming expertise can leverage low-code platforms to create custom AI-web scraping solutions. This democratizes the process and allows more people to benefit from the potential of AI-web scraping.

9. Continuous Learning Models

Continuous learning models in AI-web scraping technologies adapt to changes in site design, user behavior, and data patterns over time. This helps keep extraction accurate even in dynamic online environments.

10. Explainable AI for Transparency

To provide insights into how AI models make decisions, explainable AI techniques are being integrated into web scraping technologies. This increases transparency and trust in the outcomes.

11. Hybrid AI-Web Scraping Methodologies

Combining AI techniques with human oversight is gaining popularity as a way to ensure accurate and ethical data extraction. Hybrid approaches draw on the strengths of both AI and human expertise.

12. Real-Time Data Scraping

With growing demand for real-time data, AI website scrapers are evolving to stream data as it becomes available, enabling rapid insights and timely decision-making.

These rising technologies and trends are transforming the AI-web scraping environment, allowing businesses to access new opportunities, acquire deeper insights, and stay ahead in an increasingly data-driven world.

Understanding AI Models and Their Applications in Data Collection

A. What is an AI Model?

An artificial intelligence model is a program or algorithm that uses training data to recognize patterns and make predictions or decisions. AI models are now used for a wide variety of analytical and decision-making tasks. In general, the more relevant, high-quality data a model is trained on, the more accurate its analysis and forecasts can be.

AI models rely on techniques such as natural language processing, machine learning, and computer vision to recognize patterns. Their primary goal is to solve business problems, which they do by learning from data and reasoning over it.

B. The Significance of AI Model Training in Businesses

Data and artificial intelligence are becoming increasingly important in business. Companies rely on AI model training to make sense of the massive amounts of data being produced at an unprecedented rate. Once deployed in the real world, trained AI models can handle tasks that humans would find too difficult or time-consuming.

Below are the main ways AI models create impact in businesses:

1. Collecting Data for AI Model Training

When competitors lack access to data, have only limited access, or find it difficult to obtain, the ability to collect data for AI model training is especially valuable. Businesses can use this data to train new models and to retrain (upgrade) existing models on an ongoing basis. Data can be collected in many ways, including web scraping and deploying sensors or cameras. In general, access to large volumes of data supports the development of higher-performing AI models and, in turn, competitive advantage.

2. Generate New Data with AI Models

A Generative Adversarial Network (GAN), for example, can produce new data similar to its training data. A GAN learns from a training set to generate new samples with statistics similar to that set, so it can create images, videos, or text.

New generative AI models (such as DALL-E 2) can now create artistic and photorealistic images. AI models can also generate entirely new data sets (synthetic data) or artificially expand existing data (data augmentation) to train more robust algorithms.

3. Analyze Existing Data with AI Models

Model inference is the practice of using a trained model to make predictions on new data. For example, if your machine learning model is trained to recognize cars in images, model inference lets it recognize cars in new images it has never seen before.

4. Automate Tasks with AI Model Training

To be used in business, trained AI models are incorporated into pipelines. A pipeline includes stages such as data collection, transformation, analysis, and output.

In computer vision applications, a vision pipeline captures the video stream and performs image processing before feeding individual frames into the deep learning (DL) model. In industry, this can be used, for example, to automate visual inspection or to count bottles on conveyor belts.
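The pipeline stages listed above can be sketched as a chain of functions, each consuming the previous stage’s output. The stage functions here are hypothetical stand-ins operating on already-scraped price strings, not a real model pipeline.

```python
def run_pipeline(data, stages):
    """Pass data through each stage in order: collect -> transform -> analyze -> output."""
    for stage in stages:
        data = stage(data)
    return data


def collect(pairs):
    """Stand-in for scraping: turn (url, raw price) pairs into records."""
    return [{"url": url, "price": raw} for url, raw in pairs]


def transform(rows):
    """Parse '$10.00'-style strings into floats."""
    return [{**row, "price": float(row["price"].lstrip("$"))} for row in rows]


def analyze(rows):
    """Summarize the cleaned records."""
    prices = [row["price"] for row in rows]
    return {"count": len(prices), "mean_price": sum(prices) / len(prices)}


def output(summary):
    """Format the summary for a report."""
    return f"{summary['count']} items, mean price {summary['mean_price']:.2f}"
```

Usage: `run_pipeline([("/a", "$10.00"), ("/b", "$20.00")], [collect, transform, analyze, output])` returns `"2 items, mean price 15.00"`. Keeping stages as separate functions lets one stage, such as the model, be swapped without touching the others.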

Overall, AI-based models can help businesses become more efficient, competitive, and profitable by supporting better data-driven decisions.

C. Common AI Models and Their Data Extraction Applications

1. Linear Regression Model

Data scientists who work with statistics often use this model. Linear regression is a supervised learning method: during training, the model learns the relationship between inputs and outputs so that it can predict the value of a dependent variable from the value of an independent variable. These models are used in a variety of industries, including healthcare, insurance, eCommerce, and banking.
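For a single input variable, linear regression has a closed-form least-squares fit. A minimal pure-Python sketch, where `xs` might be a hypothetical scraped feature such as floor area and `ys` the listing price:

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x
```

For points lying exactly on y = 2x + 1, the fit recovers a slope of 2 and an intercept of 1.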

2. Logistic Regression

Logistic regression is a popular model for binary classification problems. As a statistical model, it predicts the class of a dependent variable from a set of independent variables.

It closely resembles linear regression, but unlike linear regression, it is used for classification rather than for predicting continuous values.
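A minimal logistic regression sketch for one feature, trained with batch gradient descent on the log loss. The learning rate and epoch count are assumptions chosen for a small, well-separated example, not tuned defaults.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(xs, ys, lr=0.1, epochs=2000):
    """Fit p(y=1|x) = sigmoid(w*x + b) with batch gradient descent on log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            error = sigmoid(w * x + b) - y  # derivative of log loss w.r.t. the logit
            grad_w += error * x
            grad_b += error
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x, threshold=0.5):
    """Return class 1 if the predicted probability reaches the threshold, else 0."""
    return int(sigmoid(w * x + b) >= threshold)
```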

3. Deep Neural Networks (DNN)

A Deep Neural Network (DNN) is an Artificial Neural Network (ANN) with several hidden layers between the input and output layers, and it is one of the most common AI/ML models. DNNs are built from interconnected units called artificial neurons and are inspired by the neural networks of the human brain.

Deep neural networks have been widely used in mobile app development to enable image and speech recognition, as well as natural language processing capabilities. Neural networks are also used to power computer vision applications.

4. Decision Tree

This AI model is simple to understand and highly effective. A decision tree learns from past examples and reaches a decision through a sequence of if/then rules. For example: if you make a sandwich at home, then you won’t have to buy lunch.

Both regression and classification problems can be solved using decision trees. Furthermore, early versions of predictive analytics were driven by simple decision trees.

5. Random Forest

Random Forest is an ensemble learning model for regression and classification problems. It combines many decision trees using the bagging approach to produce the final prediction.

Basically, it produces a ‘forest’ of several decision trees, each trained on a different sample of data, and then combines the results to deliver more accurate predictions.
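The bagging idea can be sketched in pure Python. For brevity this uses depth-one trees (stumps) split by Gini impurity and omits the random feature subsampling that full random forests also use, so it is strictly a bagged-stumps ensemble rather than a complete random forest. All function names are illustrative.

```python
import random
from collections import Counter


def _gini(labels):
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values()) if n else 0.0


def fit_stump(X, y):
    """Find the single (feature, threshold) split with the lowest weighted Gini impurity."""
    majority = Counter(y).most_common(1)[0][0]
    best = None
    for f in range(len(X[0])):
        for t in sorted({row[f] for row in X}):
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            score = (len(left) * _gini(left) + len(right) * _gini(right)) / len(y)
            if best is None or score < best[0]:
                left_label = Counter(left).most_common(1)[0][0] if left else majority
                right_label = Counter(right).most_common(1)[0][0] if right else majority
                best = (score, f, t, left_label, right_label)
    _, f, t, left_label, right_label = best
    return lambda row: left_label if row[f] <= t else right_label


def fit_forest(X, y, n_trees=25, seed=0):
    """Bagging: train each stump on a bootstrap sample drawn with replacement."""
    rng = random.Random(seed)
    trees = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(X)) for _ in range(len(X))]
        trees.append(fit_stump([X[i] for i in idx], [y[i] for i in idx]))
    return trees


def predict_forest(trees, row):
    """Combine the trees by majority vote."""
    return Counter(tree(row) for tree in trees).most_common(1)[0][0]
```

Each stump sees a slightly different resample of the data, so their individual errors partly cancel out in the vote.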

D. How the APISCRAPY AI Model Improves Data Extraction Speed and Accuracy

Artificial intelligence (AI) has the potential to greatly increase the speed and accuracy of data extraction. By using modern algorithms and machine learning models, it improves data extraction in several ways:

1. Automated Pattern Recognition

AI algorithms can easily detect patterns in data sources. As a result, they can quickly locate key data points, greatly accelerating the extraction process.

2. Real-Time Analysis of Scraped Data

APISCRAPY’s AI model can analyze data in real time as it is extracted, shortening the time between data capture and actionable findings.

3. Learning and Adaptation

AI models learn and adapt based on previous extraction data. As they encounter similar patterns, they extract useful data more efficiently, progressively speeding up the process.

4. Handling Dynamic Content

Many modern websites employ dynamic content created by JavaScript. AI can interact with and extract data from these dynamic sections while maintaining extraction speed.

5. Efficient Resource Allocation

AI algorithms optimize the allocation of computational resources, preventing bottlenecks and ensuring efficient use for quick extraction.

6. Cognitive Automation

AI replicates human actions such as engaging with data sources, navigating webpages, and interacting with interfaces. This automation speeds up the process by eliminating manual steps.

7. Customizable Extraction Rules

AI-powered solutions can be configured to extract specific data elements based on user-defined rules, reducing the need for manual filtering.
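User-defined extraction rules can be as simple as a mapping from field names to patterns. A sketch assuming hypothetical regex rules for a product page’s text; it is not APISCRAPY’s configuration format.

```python
import re

# Hypothetical user-defined rules: field name -> regex with one capture group
RULES = {
    "sku": r"SKU:\s*([A-Z0-9-]+)",
    "price": r"Price:\s*\$?([\d,]+\.\d{2})",
    "stock": r"(In stock|Out of stock)",
}


def apply_rules(text, rules=RULES):
    """Extract only the requested fields; fields with no match come back as None."""
    out = {}
    for field, pattern in rules.items():
        match = re.search(pattern, text, flags=re.IGNORECASE)
        out[field] = match.group(1) if match else None
    return out
```

Keeping rules as data rather than code lets non-developers add or change fields without editing the extractor.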

8. Preprocessing Optimization

AI can automate data preprocessing steps such as cleaning and formatting, so the extracted data is immediately ready for analysis.

9. Reduction in Intervention

By automating extraction, AI reduces the need for human intervention, saving time and reducing errors.

10. Scalability

AI-powered data extraction methods are highly scalable. They can handle growing data volumes while maintaining speed and accuracy.

AI Training Data Sets: The Foundation of Machine Learning

A. What are AI Training Data Sets?

AI training data sets are the foundation of machine learning: they are used to build, refine, and fine-tune machine learning models. A training data set is a collection of data samples used to train machine learning algorithms to make predictions, classifications, or decisions.

B. How Does Web Scraping Augment AI Training Data Sets?

Web scraping is essential for augmenting training data sets with real-world, dynamic, and diverse data. Here’s how web scraping helps to supplement training data sets:

1. Continuous Data Updates

Web scraping lets you continuously acquire new data from online sources, which helps keep AI training data sets current and relevant.
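Continuous collection means later scraping runs return many records already in the data set. A minimal sketch of incremental merging keyed on a content hash; the function names and record shape are assumptions.

```python
import hashlib
import json


def content_key(record):
    """Stable fingerprint of a record's content (key order does not matter)."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


def merge_new(dataset, seen_keys, fresh_batch):
    """Append only records not seen in earlier runs; return how many were added."""
    added = 0
    for record in fresh_batch:
        key = content_key(record)
        if key not in seen_keys:
            seen_keys.add(key)
            dataset.append(record)
            added += 1
    return added
```

Persisting `seen_keys` between runs lets each scheduled scrape add only genuinely new samples.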

2. Real-World Relevance

Data scraped from the internet represents real-world events, trends, and changes. This real-time relevance is critical for AI training data sets used in applications that demand current information, like sentiment analysis in the news or stock market prediction.

3. Data Variety

Web scraping can collect data in a variety of formats, including text, photos, videos, and others. This variety broadens the spectrum of training data, making it more reflective of real-world problems.

4. Data Volume Scaling

Web scraping can collect massive volumes of data from numerous websites and sources. This scalability increases the amount of data available for training, which is especially important for deep learning models.

5. Dynamic Content Management

Many websites use dynamic content that changes constantly. Web scraping can interact with and extract data from dynamic elements, capturing the most recent information.

6. User-Generated Content

User-generated content, such as reviews, comments, and social network posts, can be accessed by web scraping. This type of data contains useful information and can be utilized in AI model training for sentiment analysis and user behavior prediction.

7. Data Acquisition in Multiple Languages

Web scraping can collect data from websites in many languages, supporting AI model training for multilingual applications such as language translation and global market analysis.

8. Efficiency and Cost-cutting Measures

When compared to manual data entry or surveys, web scraping automates the data collection process, saving time and resources. This efficiency enables the collection of more data at a reduced cost.

9. Customization and Targeted Data Collection

Web scraping can be customized to collect specific sorts of data from websites, allowing data scientists to focus their efforts on acquiring information that is most relevant to their models.

10. Data Preprocessing

Web-scraped data usually requires preprocessing, such as cleaning and formatting. Automating this step prepares the data for training without manual intervention.

To summarize, web scraping delivers a steady stream of diverse, real-time data that supplements and expands AI training data sets. This is especially valuable for machine learning and data science applications that need current, real-world data and must adapt to changing conditions.

Web Data Extraction: Leave Everything to AI or Combine It with Human Interaction

Given the sheer volume of data to analyze, there is nothing wrong with using artificial intelligence to acquire it.

We have already entered the age of artificial intelligence, in which sophisticated software learns, adapts, and collects data across a wide range of applications. AI has even made it possible to carry out work in environments too dangerous for humans, such as military and security operations.

In conclusion, while AI website scrapers are powerful tools, businesses should use them with awareness of their limitations. Combining AI with human oversight, legal compliance, and regular monitoring can help mitigate these challenges and ensure accurate and ethical data extraction. At APISCRAPY, we combine AI web scraping with human review to ensure the data is relevant and accurate before delivery. Why wait? Embrace AI web scraping and extract your data seamlessly.

FAQs on AI Web Scraping

1. Can AI website scrapers bypass CAPTCHAs?

Some advanced AI website scrapers, such as APISCRAPY, can bypass CAPTCHAs, although doing so may not be legal in many circumstances. Make sure you are aware of the legal implications.

2. Are there any free AI website scrapers?

There are free and open-source AI web scrapers available, although they may have limitations compared to premium options.

3. What kinds of data can AI website scrapers extract?

Text, photos, pricing, product details, and other data can be extracted using AI website scrapers.

4. How can I ensure that the data I scrape is correct?

You may ensure accuracy by validating scraped data, implementing error handling, and periodically updating your scraping scripts. Also, with APISCRAPY the combination of AI and human expertise ensures data accuracy.

5. Are there any ethical concerns to be aware of when using AI website scrapers?

Respecting website terms of service, not overloading servers, and not exploiting scraped data for illegal or harmful reasons are all ethical considerations.

Jyothish, Chief Data Officer

A visionary operations leader with more than 14 years of diverse industry experience managing projects and teams across IT, automobile, aviation, and semiconductor product companies. Passionate about driving innovation, fostering collaborative teamwork, and helping others achieve their goals.

Certified scuba diver, avid biker, and globe-trotter, he finds inspiration in exploring new horizons both in work and life. Through his impactful writing, he continues to inspire.

AIMLEAP Automation Practice

APISCRAPY is a scalable data scraping (web & app) and automation platform that converts any data into a ready-to-use data API. The platform can extract data from websites, process data, automate workflows, and integrate ready-to-consume data into a database or deliver it in any desired format. APISCRAPY practice provides capabilities that help create highly personalized digital experiences, products, and services. Our RPA solutions help customers gain insights from data for decision-making, improve operational efficiency, and reduce costs. To learn more, visit us at www.apiscrapy.com
